Consulting Services

Get a leg up on the competition.

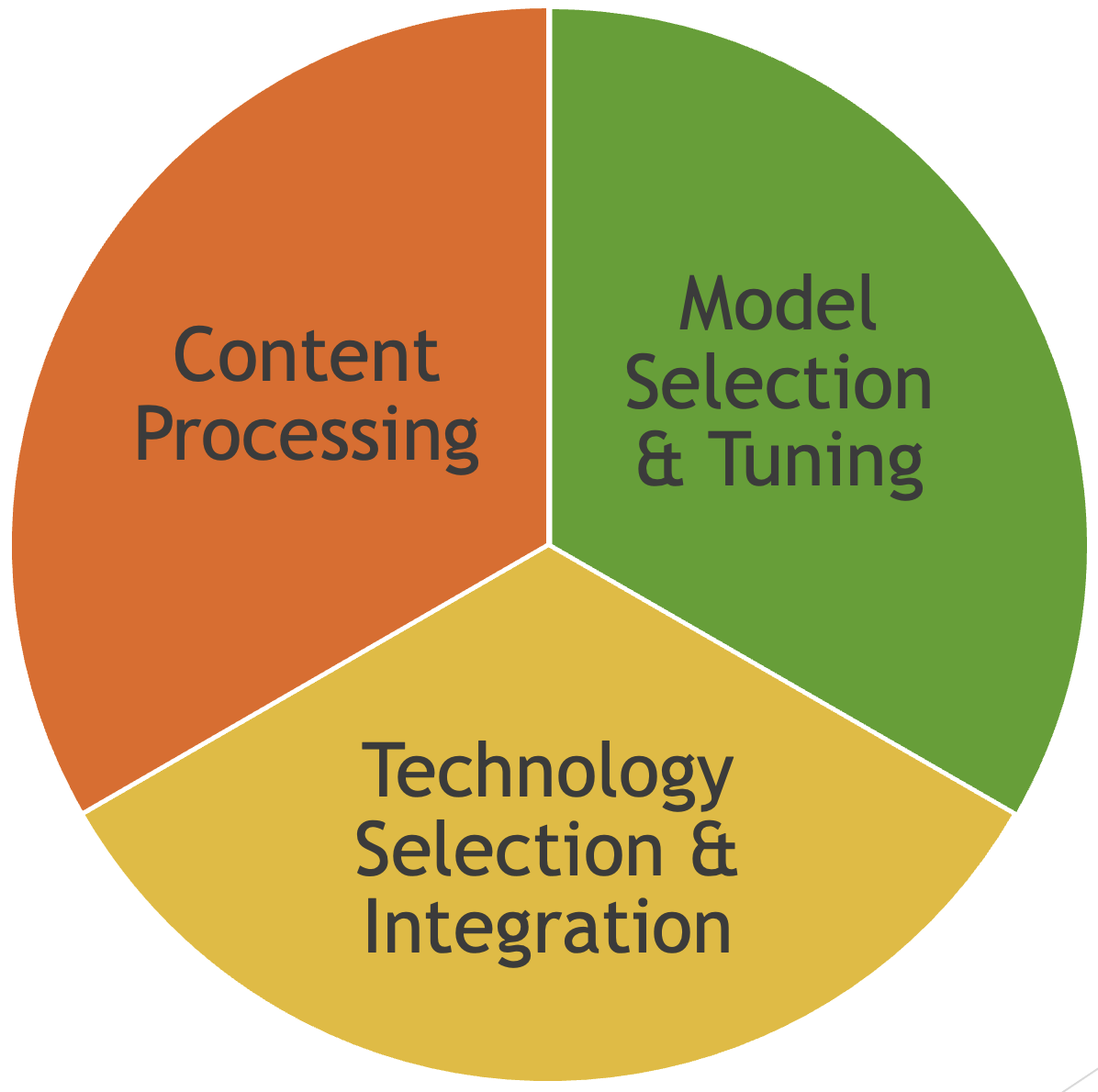

We help bring your products to the forefront of technology. Our experts work alongside your team to deliver content understanding, vector/hybrid search solutions, chatbots, and more. Be the disruptor, not the disruptee!

Case Study: See how we directly increased customer satisfaction for complex queries in a world-leading Clinical Decisions platform. Watch the video on YouTube.

Explore our services